Volkswagen to use XPENG’s VLA 2.0 intelligent driving system in China

KUALA LUMPUR: XPENG has laid out a clearer timeline for the next stage of its intelligent driving push, confirming that its new VLA 2.0 system is planned for global delivery in 2027, while Robotaxi trial operations in China are set to begin later this year.

KEY TAKEAWAYS

What is XPENG VLA 2.0?

VLA 2.0 is XPENG’s next-generation intelligent driving system designed to support advanced autonomous driving capabilities.

When will XPENG VLA 2.0 be available?

XPENG says global deployment of the system is scheduled to begin in 2027.

Will Robotaxis use the VLA 2.0 system?

Yes. XPENG has already started public road testing Robotaxis equipped with VLA 2.0, with trial operations planned to begin later this year.

The Chinese carmaker made the announcement during its “The Future” VLA Media Experience Day in Guangzhou on March 2, where it detailed both the technical architecture behind the system and how it plans to roll it out beyond China.


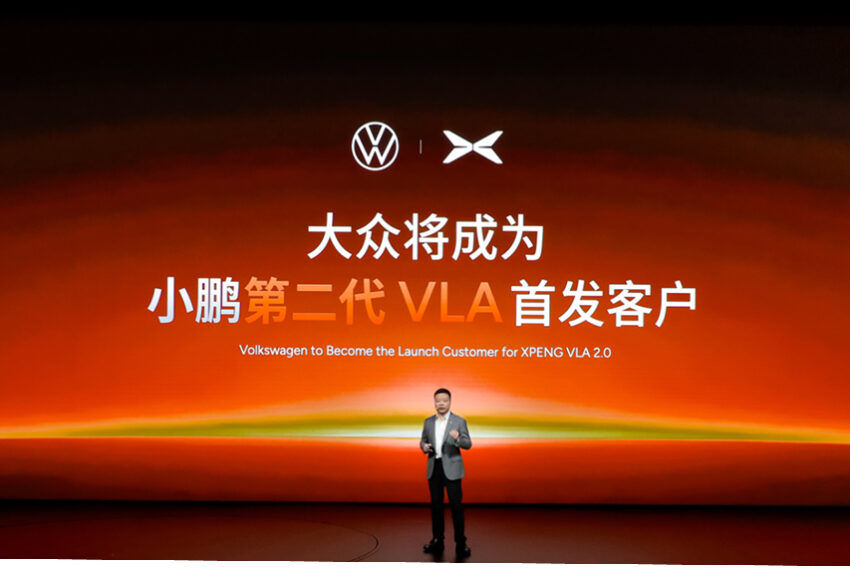

One of the biggest headlines from the event was Volkswagen being named the first customer for XPENG’s next-generation intelligent driving system in the Chinese market.

That is a notable development on its own, because it shows XPENG is not only building this technology for its own vehicles, but is also positioning itself as a supplier of advanced driving systems to other major carmakers.

According to XPENG, its Robotaxi fleet fitted with VLA 2.0 has already entered public road testing. Trial operations are expected to begin in China before the end of 2026, with international road testing to follow as the company works towards a wider global rollout in 2027.

At the centre of this push is VLA 2.0 itself, which XPENG describes as a major step forward from more conventional intelligent driving systems. Instead of relying on a traditional modular setup where perception, reasoning and action are split into separate layers, VLA 2.0 brings those elements together within a single AI foundation model.

In simpler terms, the system is meant to process what it sees, understand what is happening around it, and make a driving decision more directly, without as many intermediate steps slowing things down. XPENG says this approach improves reaction speed, broadens the system’s ability to deal with different scenarios, and reduces dependence on high-definition maps and rigid rule-based programming.

The company says the new setup is built to better interpret messy real-world situations, not just clean demo conditions. That includes things like dense city traffic, mixed road users, accident scenes, badly surfaced roads and vehicles behaving unpredictably. XPENG claims the system can respond in a way that increasingly resembles an experienced human driver, with the aim of making assisted driving feel smoother, more stable and less awkward.

XPENG chairman and CEO He Xiaopeng said: "XPENG's VLA 2.0 is the first version designed to achieve full autonomous driving and will iterate at an unprecedented pace. We believe that full autonomy will arrive within the next one to three years, making autonomous driving a natural part of people's daily travel."

XPENG is also pitching VLA 2.0 as a more complete assisted driving solution rather than one that only works well in carefully defined situations.

The company says the system is capable of operating across a wider range of routes and surfaces, including campus roads, rural dirt paths and areas that are not typically covered by standard navigation systems.

It is also said to handle tricky real-world obstacles such as narrow lane sections and potholes, while supporting movement from a standstill, something XPENG describes as part of a full-process assisted driving experience.

Another claim made by the company is that VLA 2.0 improves driving efficiency by 23%. In tests conducted during evening rush hour in Guangzhou, XPENG says the system outperformed more conventional Level 2 intelligent driving systems as well as existing Robotaxi models in traffic flow efficiency, with results said to be comparable to experienced human drivers.

From a technical standpoint, XPENG says VLA 2.0 marks a shift away from the usual vision-language-action pipeline used in many AI driving models. In those systems, visual input is first interpreted, then converted into a language-based reasoning process before the car makes a driving decision.

With VLA 2.0, XPENG says it has moved to an end-to-end vision-to-action architecture. That means the system can go more directly from seeing the road environment to making driving decisions, cutting out extra translation layers in between. The expected benefit is faster response, lower complexity and better overall efficiency.

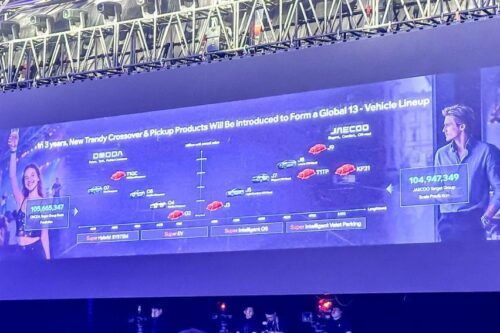

XPENG also made it clear that this AI architecture is not being developed only for passenger cars. The same core foundation model behind VLA 2.0 is being extended to other embodied AI applications, including Robotaxi fleets, humanoid robots and modular flying vehicle systems.

That broader expansion sits under the company’s Physical AI strategy, which appears to be aimed at building one shared intelligence base that can work across multiple platforms instead of being limited to cars alone.

As far as announcements go, this one was less about a new production model and more about where XPENG sees its future business heading.

Between the 2027 delivery target, public road testing of Robotaxis, and Volkswagen coming in as the first customer in China, the company is clearly trying to position VLA 2.0 as something bigger than just another assisted driving update.

